The quiet moment a prototype becomes permanent

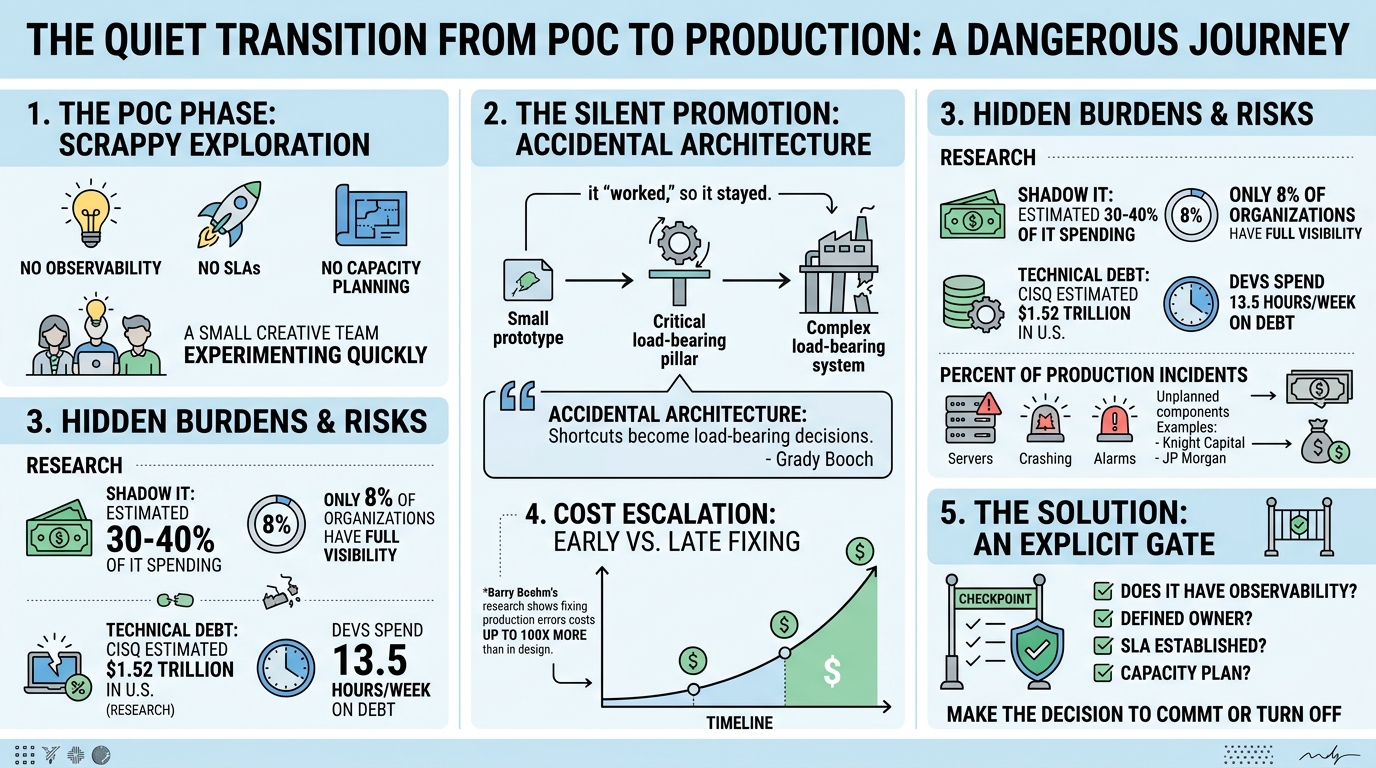

A few months ago I watched a team demo a proof of concept they'd built in two weeks. Clever work. No monitoring, no SLAs, no capacity planning. Just a fast, scrappy experiment to test a hypothesis.

Six months later that same system was processing customer transactions in production. Nobody made a decision to promote it. It just... stayed.

Grady Booch called this "accidental architecture" back in 2006. Every shortcut taken during exploration becomes a load-bearing architectural decision by default. Not because anyone chose it, but because nobody stopped it.

The scale is hard to overstate. Gartner estimates shadow IT accounts for 30 to 40 percent of IT spending in large enterprises. Only 8 percent of organizations report full visibility into their shadow IT footprint. IBM's 2025 Cost of a Data Breach Report found shadow AI was a factor in 20 percent of breaches, adding $670,000 to average breach costs.

The real problem runs deeper than security. A POC represents roughly 20 percent of the total journey to production. The other 80 percent is scalability, observability, compliance, reliability. AIM Consulting estimates teams should expect to replace 50 to 80 percent of original POC code to meet production standards. When that never happens, you get architectural debt.

The numbers are staggering. CISQ estimated accumulated software technical debt in the U.S. at $1.52 trillion. Stripe's research showed developers spend about 13.5 hours per week dealing with it. Their managers were generally unaware.

The case studies are instructive. Knight Capital lost $460 million in 45 minutes because decommissioned code from 2005 was accidentally reactivated through a repurposed feature flag. JP Morgan's London Whale losses of $6.2 billion traced back to a Value at Risk model running through Excel with manual copy-paste between files. Their own internal report said the process "should be automated." Nobody automated it.

A Cortex survey found 32 percent of engineering leaders have no formal process for reviewing software alignment to standards after initial launch, addressing problems only after incidents. Yet 98 percent of those same leaders have witnessed significant negative consequences from failing to meet production readiness standards.

Barry Boehm's research showed fixing a requirements-level error in production can cost up to 100x more than fixing it during design.

The transition from experiment to commitment needs an explicit gate. Not a heavyweight governance board. Just a clear, deliberate moment where someone asks: does this system now have observability, an owner, an SLA, and a capacity plan? If the answer is no, it either gets those things or gets turned off.

Most organizations have nothing like this. The most dangerous moment in a system's lifecycle is not when it fails. It is the quiet moment when someone decides it "works well enough" and moves on to the next sprint.

When prototypes quietly become production: the research behind the risk

The pattern is well-documented and alarmingly common: proof-of-concept systems built under exploratory constraints — no observability, no SLAs, no capacity planning — silently graduate into load-bearing production infrastructure simply because they "worked." Research from Gartner, McKinsey, IBM, and RAND confirms this creates a compounding crisis of technical debt, security exposure, and fragile architecture. The data below provides a factual foundation for each angle of the post, organized by theme.

The scale of ungoverned systems hiding in plain sight

Shadow IT — the umbrella category that encompasses most unsanctioned POCs — is far larger than most leaders realize. Gartner consistently estimates shadow IT accounts for 30–40% of IT spending in large enterprises, a figure Everest Group's CEO Peter Bendor-Samuel calls an understatement, suggesting the real number exceeds 50%. BetterCloud found the number of SaaS applications on corporate networks was roughly 3× what IT departments knew about, and Productiv's 2022 analysis showed shadow IT represented 42% of all applications in a typical company portfolio.

The human behavior driving this is accelerating. Gartner's 2023 research found 41% of employees acquire, modify, or create technology outside IT's visibility — and projects that figure will reach 75% by 2027. The emerging "shadow AI" trend amplifies the problem: Microsoft and LinkedIn's 2024 Work Trend Index revealed 78–80% of knowledge workers already use personal AI tools at work, with 70% hiding their ChatGPT usage from employers. Forrester predicted 60% of employees would adopt AI tools without IT approval in 2024. Only 8% of organizations report full visibility into their shadow IT footprint, and 80% have no formal process to audit shadow IT applications regularly.

These ungoverned systems carry real costs. IBM's 2025 Cost of a Data Breach Report found shadow AI was a factor in 20% of breaches, adding $670,000 to average breach costs. In 2024, IBM reported 35% of all data breaches involved shadow data, with those breaches costing $5.27 million on average — 16% higher than the norm — and taking 26% longer to identify. Kaspersky's 2024 research attributed 11% of all cyber incidents directly to unauthorized shadow IT usage.

The missing gate between experiment and production commitment

The organizational failure at the heart of the POC-to-production problem is the absence of explicit transition gates. A Cortex 2024 survey of 50 engineering leaders at companies with 500+ employees found that 32% have no formal process for reviewing software alignment to standards after initial launch — they only address issues after incidents occur. Yet 98% of those same leaders have witnessed at least one significant negative consequence from failing to meet production readiness standards. The most common consequences: 62% saw increased change-failure rates, 56% saw longer mean time to resolve incidents, and 54% reported decreased developer productivity.

The confidence gap is telling. Average confidence in production readiness programs rated just 6.4 out of 10. Teams tracking standards via spreadsheets were 4× less likely to feel confident than those using Internal Developer Portals, and organizations without continuous evaluation saw their change-failure rates increase at a rate of 94% compared to 38% for those with continuous assessment. Only 16% employ continuous monitoring of production readiness at all.

The AI/ML domain offers the most heavily studied version of this problem. IDC found that 88% of AI POCs never reach widescale deployment — for every 33 AI POCs launched, only 4 graduated to production. S&P Global's 2025 survey of over 1,000 respondents showed the average organization scrapped 46% of AI proof-of-concepts before production, and 42% of companies abandoned most AI initiatives entirely (up from 17% the prior year). Gartner's May 2024 data puts the broader AI success rate at 48% reaching production, with an average 8-month journey from prototype to production for those that do survive. Meanwhile, RAND Corporation found AI project failure rates are nearly double those of traditional IT projects. The pattern is clear: organizations are running experiments at scale but lack the institutional machinery to either promote or kill them decisively — creating "pilot purgatory."

Multiple sources converge on a critical insight: a POC represents only about 20% of the total journey to production. The remaining 80% involves non-functional requirements — scalability, security, compliance, reliability, and observability. AIM Consulting estimates teams should anticipate replacing 50–80% of original POC code to meet production standards. When that replacement doesn't happen, you get what Boeing's enterprise software engineering team calls "reified prototyping" — solutions built for initial capability demonstrations that get evolved into production systems without the process rigor required, resulting in high-cost re-engineering downstream.

Four case studies where prototypes became catastrophes

Knight Capital Group (August 2012): $460 million lost in 45 minutes. Knight Capital's trading system contained decommissioned code called "Power Peg" — dead since 2005 but never removed from the codebase. During a deployment for NYSE's new Retail Liquidity Program, developers repurposed an old feature flag that had once controlled this dead code. One of eight servers failed to update correctly, silently retaining the old code. When markets opened, the flag reactivated the broken Power Peg logic on the lagging server, executing trades that bought at ask prices and sold at bid prices — instantly losing money on every transaction. The system produced 4 million erroneous trades across 154 stocks in 45 minutes. Engineers attempting recovery rolled back to the old version on all eight servers, which activated the defective code everywhere, accelerating the disaster. Knight had no documented incident response procedures. The company's stock dropped 75%, it required $400 million in emergency funding, and was acquired by Getco within months.

JP Morgan "London Whale" (2012): $6.2 billion from an Excel spreadsheet. The Value at Risk model underpinning JP Morgan's Chief Investment Office hedging strategy operated through a series of Excel spreadsheets completed manually by copying and pasting data between files. JP Morgan's own 130-page internal report acknowledged the process "should be automated" — but it never was. The report documented that the model developer "had not previously developed or implemented a VaR model," that "spreadsheet-based calculations were conducted with insufficient controls and frequent formula and code changes," and that a critical formula error divided by a sum instead of an average, drastically underestimating risk. A prototype-quality tool was making multi-billion-dollar risk decisions.

AWS S3 outage (February 2017): 4 hours, significant portion of the internet down. An authorized engineer debugging a billing issue executed an internal command intended to remove a small number of servers, but an incorrect input caused a far larger set to be removed. The internal operational tool allowed too much capacity to be removed too quickly with no safety checks. This triggered a full restart of the S3 index and placement subsystems. AWS's own postmortem admitted: "We have not completely restarted the index subsystem or the placement subsystem in our larger regions for many years" — meaning the restart behavior was essentially untested at production scale. The tool, built for internal operations without the rigor of customer-facing software, took down Netflix, Slack, Adobe, Reddit, and dozens of others. Even the AWS Service Health Dashboard couldn't update because it depended on S3.

Facebook/Meta global outage (October 2021): 6–7 hours offline. During routine backbone maintenance, a command accidentally disconnected all of Facebook's data centers. An internal auditing tool designed to prevent such misconfigurations contained a software bug that allowed the destructive change to proceed. The cascade was total: internal diagnostic tools went down, preventing remote troubleshooting. Employee badge systems — dependent on the same infrastructure — stopped working, physically blocking engineers from entering buildings. Facebook stock dropped roughly 5%, Mark Zuckerberg's wealth fell by $6 billion in a day, and the outage generated over 10 million problem reports on Downdetector, the largest single event in that platform's history.

Technical debt compounds when architecture is accidental

The financial weight of technical debt from ungoverned systems is staggering. CISQ's 2022 report estimated the cost of poor software quality in the U.S. at $2.41 trillion, with accumulated software technical debt alone at $1.52 trillion. McKinsey's survey of CIOs at billion-dollar companies found technical debt accounts for roughly 40% of their entire IT balance sheets, with companies paying an additional 10–20% on every project to work around existing debt. One North American bank McKinsey studied found its 1,000+ systems generated over $2 billion in tech-debt costs.

The productivity toll is equally concrete. Stripe's 2018 Developer Coefficient study (conducted with Harris Poll across thousands of developers and executives) found developers spend 33% of their working time — 13.5 hours per week — dealing specifically with technical debt, costing companies an estimated $85 billion annually. Academic research from Chalmers University (Besker, Martini, and Bosch, 2018) confirmed the pattern with a longitudinal study: developers waste 23% of development time due to technical debt, a figure that rose to 36% in a larger follow-up survey. The researchers noted developers were unsurprised by the finding; their managers were generally unaware. Gartner's 2025 data shows 93% of development teams report currently experiencing technical debt, with fewer than 20% of application and software engineering leaders rating themselves "very effective" at managing it.

The cost-of-change curve reinforces why POC-origin architecture is so expensive to fix later. Barry Boehm's foundational research (confirmed by NASA, IBM, and NIST studies) established that fixing a requirements-level error in production can cost up to 100× more than fixing it during design for large systems. NIST quantified this practically: fixing one bug in production takes roughly 15 hours versus 5 hours during coding. Gartner predicts that by 2026, 80% of technical debt will be architectural in nature — precisely the kind of debt POC-origin systems create when their ad hoc structures calcify into the foundation of production workloads. Orca Security's 2024 State of Cloud Security Report found 91% of organizations carry vulnerabilities over 10 years old, and 46% harbor ones over 20 years old — quantitative evidence of how "temporary" persists.

The observability black hole where POCs live

POC-origin systems are particularly dangerous because they typically launch without the monitoring, alerting, and capacity planning that would make failures visible before they become catastrophic. The Cortex survey found SLOs and runbooks are among the most challenging production readiness items to enforce, with over 30% of even the most confident engineering leaders struggling with SLO enforcement. Only 28% of respondents mentioned infrastructure provisioning or SLOs as automation priorities.

In the AI domain — where POC-to-production transitions are most actively tracked — 63.4% of enterprises cite lack of monitoring and observability as the top barrier to wider deployment, and 53% expect to significantly rebuild or redesign AI agent systems they've already deployed due to lack of visibility. The GitLab database incident of January 2017 illustrates the failure mode perfectly: five out of five backup and recovery mechanisms had failed or were misconfigured, and nobody knew because restoration had never been tested. A manually triggered snapshot taken 6 hours earlier by an engineer working on an unrelated task was the only thing that prevented total data loss.

Grady Booch named this phenomenon in a 2006 IEEE Software paper: "accidental architecture." He wrote that while every interesting software system has an architecture, "most appear to be accidental" — emerging from accumulated individual decisions rather than intentional design. The concept maps directly to the POC-to-production pattern: each shortcut taken during the exploratory phase becomes a load-bearing architectural decision by default. Object Computing's 2024 analysis identifies the symptoms: "Perpetual First Aid" (constant bug fixes), "Hero Technical Team" (individuals routinely handling risky challenges to sustain normal operations), and "Innovation Gridlock" (new features taking too long and bringing unexpected failures).

Conclusion

The research paints a consistent picture across analyst firms, incident databases, and academic studies. Three facts anchor the narrative. First, the governance gap is structural: 32% of engineering organizations have no post-launch review process, while shadow IT consumes 30–40% of enterprise IT spending largely outside any oversight framework. Second, the financial consequences are concrete and enormous: Knight Capital's $460 million, JP Morgan's $6.2 billion, and the industry-wide $1.52 trillion in accumulated technical debt all trace back to systems that were never designed for the load they eventually carried. Third, the compounding nature of the problem means delay makes it worse: Boehm's 100× cost multiplier, Gartner's prediction that 80% of technical debt will be architectural by 2026, and the finding that developers lose a quarter to a third of their productive time to debt they didn't create — all point to the same conclusion. The most dangerous moment in a system's lifecycle isn't when it fails. It's the quiet moment when someone decides it "works well enough" and moves on to the next sprint.

Commissioned from our research desk. Subject to final editorial discretion.

The dangerous moment when a proof-of-concept gets accidentally promoted to production infrastructure. Cover how POCs built under exploratory constraints—no observability, no SLAs, no capacity planning—quietly become load-bearing systems because they 'worked.' Research stats on shadow IT prevalence and the percentage of production incidents traced to unplanned components. The takeaway is that the transition from experiment to commitment needs an explicit gate, and most organizations don't have one.