Every quarter, I hear the same conversation. A senior leader walks into a room and says, "We need an AI strategy." What follows is a scramble to stand up a proof of concept in eight weeks, built on whatever data happens to be lying around. Three months later, the demo looks impressive in a slide deck. Six months later, nobody mentions it again.

This pattern now shows up clearly in the data.

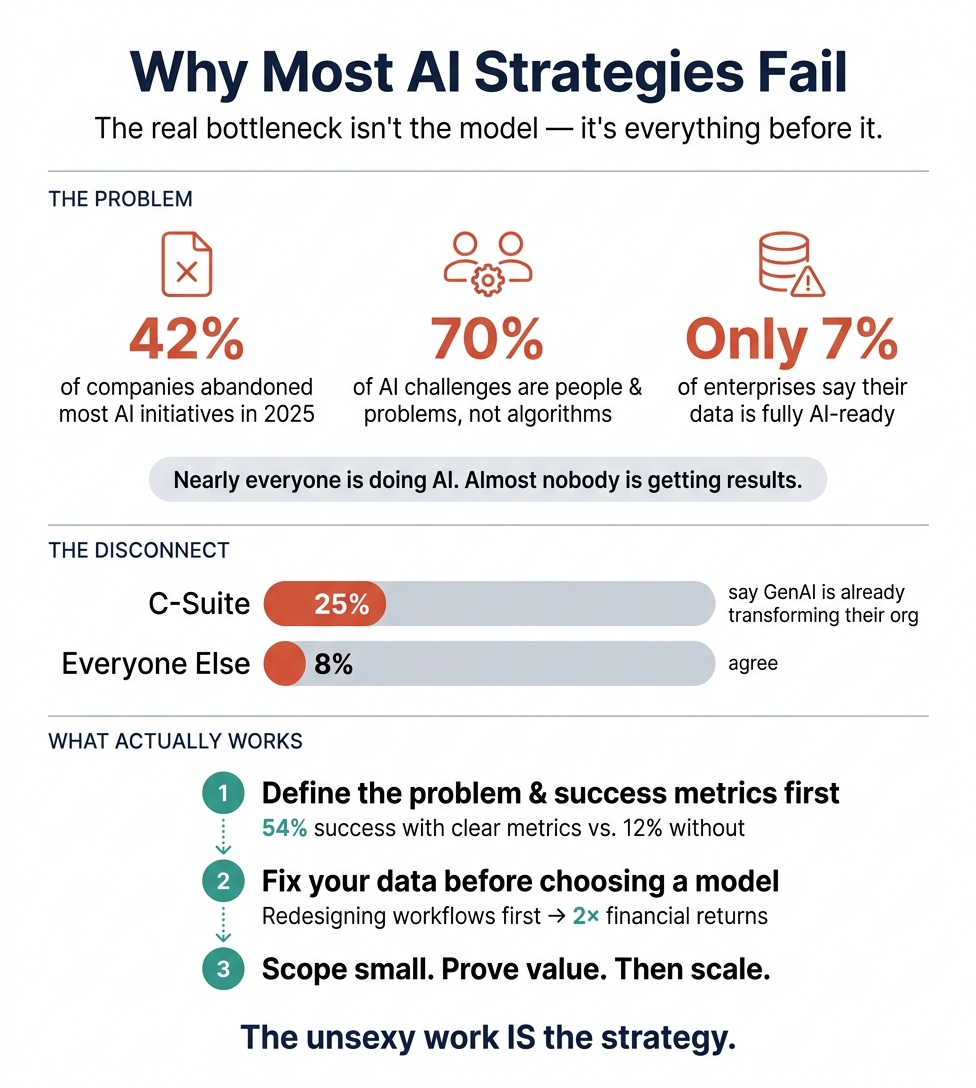

S&P Global found that 42% of companies abandoned the majority of their AI initiatives in 2025, up from 17% the year before. McKinsey, BCG, and MIT have independently converged on the same finding: roughly 5 to 6% of organizations achieve meaningful value from AI at scale. Meanwhile, 88% say they're using AI in at least one function.

Nearly everyone is doing AI. Almost nobody is getting results.

The instinct is to blame the technology. But BCG found that 70% of AI challenges are people and process problems. Only 10% involve the algorithms themselves.

RAND interviewed 65 experienced data scientists about why projects fail. The top cause was misunderstanding the problem to be solved. Teams were building answers to questions nobody had clearly asked.

This matches what I see in practice. The rush to demonstrate "AI capability" means teams skip the essential early work. Nobody defines success in terms a CFO would recognize. Nobody audits whether the data is complete or accurate. Only 7% of enterprises consider their data "completely ready" for AI. And 67% of organizations have been unable to move even half of their GenAI pilots into production.

Deloitte found a telling perception gap. Among C-suite respondents, 25% said GenAI was "already transforming" their organization. Only 8% of non-C-suite respondents agreed.

The core issue is a mismatch between what's exciting and what's necessary. Model selection is exciting. Spending six months cleaning data pipelines and aligning on what problem you're solving? Not exciting. But that's where the value lives.

Organizations reporting significant returns were twice as likely to have redesigned workflows before selecting AI models. Projects with clearly defined success metrics had a 54% success rate. Projects without them? 12%.

So what does this mean if you're leading AI efforts?

Start with the problem. Write down, in plain language, what business outcome you're trying to change and how you'll measure it. If you can't do this in two sentences, you're not ready to build anything.
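
To make the two-sentence test concrete, here is a minimal Python sketch that records a problem definition before any model work starts. The `ProblemStatement` class, its fields, and the claims-review example values are hypothetical. They are not a standard template. The only logic is a check that the outcome and metric are written down, that the target differs from today's baseline, and that a deadline exists.

```python
from dataclasses import dataclass


@dataclass
class ProblemStatement:
    """Hypothetical template for a two-sentence problem definition."""

    outcome: str          # business outcome to change, in plain language
    metric: str           # how the change will be measured
    baseline: float       # current value of the metric
    target: float         # value that counts as success
    deadline_months: int  # time allowed to reach the target

    def is_ready_to_build(self) -> bool:
        # Ready only if the outcome and metric are written down, the target
        # differs from the baseline, and there is a real deadline.
        has_text = all(s.strip() for s in (self.outcome, self.metric))
        return has_text and self.target != self.baseline and self.deadline_months > 0

    def summary(self) -> str:
        # Render the definition as the two sentences a CFO would read.
        return (
            f"We want to {self.outcome}. "
            f"Success means moving {self.metric} from {self.baseline:g} "
            f"to {self.target:g} within {self.deadline_months} months."
        )


# Hypothetical example values, for illustration only.
claims = ProblemStatement(
    outcome="reduce manual review time for insurance claims",
    metric="median review minutes per claim",
    baseline=42.0,
    target=25.0,
    deadline_months=6,
)
print(claims.is_ready_to_build())  # True
print(claims.summary())
```

A record with an empty metric returns `False`. That is the intended behavior: nothing counts as ready until the success criterion exists.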

Then look at your data honestly. Can you access what you need? Is it documented, accurate, governed? If the answer is "sort of," that's your first project.
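
The data questions above can be turned into a rough, automatable first pass. This Python sketch assumes a hypothetical `DataAudit` record that holds a sample of rows plus two governance flags. The 95% completeness threshold is an arbitrary placeholder. The sketch checks completeness, documentation, and ownership only. Accuracy is deliberately left out, because it needs comparison against a trusted reference.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DataAudit:
    """Hypothetical audit of one dataset against the questions above."""

    rows: list[dict]            # sample of records pulled from the source system
    required_fields: list[str]  # fields the use case cannot do without
    documented: bool            # is there a data dictionary or datasheet?
    owner: Optional[str]        # accountable owner, a rough proxy for "governed"

    def completeness(self) -> float:
        # Share of required cells that are present and non-empty.
        total = len(self.rows) * len(self.required_fields)
        if total == 0:
            return 0.0
        filled = sum(
            1
            for row in self.rows
            for name in self.required_fields
            if row.get(name) not in (None, "")
        )
        return filled / total

    def verdict(self, threshold: float = 0.95) -> str:
        # "no" if nothing could be pulled, "yes" if every check passes,
        # otherwise "sort of", which the advice above treats as the first project.
        if not self.rows:
            return "no"
        checks = [
            self.completeness() >= threshold,
            self.documented,
            self.owner is not None,
        ]
        return "yes" if all(checks) else "sort of"


# Hypothetical sample: one missing amount, documented, but no named owner.
audit = DataAudit(
    rows=[{"claim_id": 1, "amount": 120.0}, {"claim_id": 2, "amount": None}],
    required_fields=["claim_id", "amount"],
    documented=True,
    owner=None,
)
print(audit.completeness())  # 0.75
print(audit.verdict())       # sort of
```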

Then scope small. The 5% succeeding at scale didn't start at scale. They started with well-defined problems, proved value with real metrics, and expanded deliberately.

The pressure to move fast is real. But the data is unambiguous: rushing past the fundamentals doesn't save time. It wastes it.

The AI readiness gap: data, stats, and frameworks behind the disconnect

The single most striking finding across all major research sources is convergence on a ~5% success rate. McKinsey (2025, n=1,993), [1] BCG (2025, n=1,250), [2] and MIT (2025) independently found that roughly 5–6% of organizations achieve significant, measurable value from AI at scale — despite 88% of organizations now using AI in at least one function. [1] This gap between near-universal adoption and vanishingly rare success defines the central tension between executive AI ambitions and organizational reality. The data consistently shows that infrastructure, governance, and problem definition — not model sophistication — are the primary failure drivers.

1. Enterprise AI project failure rates are high and worsening

The most rigorous recent statistics paint a consistent picture of widespread failure, with rates that appear to be increasing as organizations rush to scale.

RAND Corporation (August 2024) reports that more than 80% of AI projects fail, roughly twice the failure rate of non-AI IT projects. [3][4] Based on structured interviews with 65 experienced data scientists and engineers [5][6] (each with 5+ years of experience), RAND identified five root causes: misunderstanding the problem, lack of training data, technology obsession over problem-solving, insufficient infrastructure, and applying AI to problems too difficult for current methods. [7][8] The 80% figure is described as corroborating existing industry estimates rather than a directly measured rate. [9]

BCG's "Where's the Value in AI?" report (October 2024), surveying 1,000 CxOs across 59 countries, found that 74% of companies have yet to show tangible value from AI, with only 4% having cutting-edge capabilities. [10] Their follow-up study in September 2025 (1,250+ firms) showed the situation worsening: 60% generate no material value at all, and only 5% achieve value at scale. [2] BCG's critical insight: 70% of AI challenges are people- and process-related, 20% technology, and only 10% involve AI algorithms. [11][12]

McKinsey's Global Survey on AI (March 2025), with 1,993 participants across 105 countries, [13] found that only 39% of respondents attribute any EBIT impact to AI, [13] and just ~6% qualify as "AI high performers" (5%+ EBIT impact). [1] This was consistent with their 2024 finding (5.3% high performers among 876 companies). [14] An important differentiator: organizations reporting significant returns were twice as likely to have redesigned end-to-end workflows before selecting AI models. [15]

S&P Global Market Intelligence / 451 Research (2025) delivered perhaps the most alarming trend data: 42% of companies abandoned the majority of their AI initiatives in 2025, up from 17% in 2024, [15] roughly a 2.5x increase in abandonment. The average organization scrapped 46% of AI proofs of concept before production. [15] This survey of 1,006 IT and business professionals across North America and Europe [16] has a margin of error of ±3 points at 95% confidence. [17]
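
The ±3-point margin quoted for the S&P Global survey matches the textbook formula for a proportion, z·√(p(1−p)/n). The Python sketch below applies that formula to several sample sizes cited in this report. It assumes simple random sampling and the worst case p = 0.5; the report does not confirm either assumption for any of these surveys. The sketch also checks the 17% to 42% jump behind the "2.5x" figure.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion.

    Assumes a simple random sample. p=0.5 gives the widest (most conservative)
    margin; z=1.96 corresponds to 95% confidence.
    """
    return z * math.sqrt(p * (1 - p) / n)


# Sample sizes quoted in this report (some are approximate).
samples = {
    "S&P Global / 451 Research": 1006,
    "Cloudera / HBR Analytic Services": 230,
    "CrowdFlower 2016": 80,
    "Gallup Q3 2025": 23068,
}
for name, n in samples.items():
    print(f"{name} (n={n:,}): ±{margin_of_error(n) * 100:.1f} points")
# S&P Global / 451 Research (n=1,006): ±3.1 points
# Cloudera / HBR Analytic Services (n=230): ±6.5 points
# CrowdFlower 2016 (n=80): ±11.0 points
# Gallup Q3 2025 (n=23,068): ±0.6 points

# Year-over-year change in abandonment, 17% -> 42%.
print(f"{42 / 17:.2f}x")  # 2.47x
```

The spread matters when weighing the surveys in this report. A CrowdFlower-sized sample carries roughly ±11 points of sampling error before representativeness is even considered. Weighted panels such as Gallup's usually report margins somewhat wider than this naive figure.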

Gartner has produced multiple data points. On average, only 48% of AI projects make it into production [3][18] (May 2024 survey, 644 respondents), with an average of 8 months from prototype to production. [3][19] In July 2024, Gartner predicted that at least 30% of generative AI projects would be abandoned after proof of concept by end of 2025. [20] That figure looks conservative next to S&P Global's 2025 data, in which the average organization scrapped 46% of its proofs of concept and 42% of companies abandoned most of their initiatives. [3] By 2025, Gartner updated this prediction to 50%. [21][22] Gartner also predicts over 40% of agentic AI projects will be canceled by end of 2027. [23]

Deloitte's State of Generative AI in the Enterprise (Q3–Q4 2024), surveying 2,770+ director-to-C-suite respondents across 14 countries, [24][25] found that nearly 70% of organizations had moved only 30% or fewer of their GenAI experiments into production. [5][26] A notable perception gap emerged: 25% of C-suite respondents felt GenAI was "already transforming" their organizations, compared to only 8% of non-C-suite respondents. [27]

MIT Project NANDA's "The GenAI Divide" (July 2025) reported that 95% of enterprise GenAI pilot programs fail to generate measurable P&L impact, [28] representing $30–40 billion in estimated collective spending. [29] However, this figure requires important caveats: the study was based on roughly 150 leadership interviews and 350 employee surveys, [30] the authors describe findings as "directionally accurate," [31][32] and the 95% includes organizations that merely investigated AI without necessarily launching pilots. [32] Critics, including Futuriom, have questioned the methodology. [29] Purchased AI solutions succeeded roughly 67% of the time versus internal builds at approximately 22%. [33]

Provenance warning: the "85% failure rate" attributed to Gartner

The widely cited claim that "85% of AI projects fail" traces to [34] a 2018 Gartner prediction [35] that "through 2022, 85% of AI projects will deliver erroneous outcomes due to bias in data, misaligned algorithms, or project team implementation." This was a forward-looking forecast about erroneous outcomes, not a measured failure rate. [36] It has been misquoted and transformed across hundreds of articles into a definitive statistic. [36] Use with extreme caution and correct attribution.

2. Most ML models still never reach production — but the "87%" stat is folklore

The commonly cited claim that "87% of ML models never make it to production" has no identifiable primary research behind it. [37] The provenance trail: an uncited 2017 CIO Dive guest article by James Roberts (then Chief Data Scientist at Quisitive) stated "a mere 13% of data and analytics projects reach completion" — with no source, methodology, or sample size. [38] IBM executive Deborah Leff paraphrased this at VentureBeat Transform 2019, [38] and the resulting VentureBeat sponsored article (July 19, 2019) crystallized it into "87% of data science projects never make it into production." [37] The statistic was then amplified through MLOps vendor marketing into apparent authority. As researcher Mateusz Kwaśniak concluded in a 2023 provenance investigation: the community "spread such unconfirmed piece of information" despite operating close to R&D and academic standards. [37][38]

More rigorous data on model deployment rates is available. Gartner (May 2024, 644 respondents) found that 48% of AI projects make it into production, with an average of 8 months from prototype to deployment. [3] That is down from their 2022 finding of 54%, [39] a worrying decline. Algorithmia's 2020 State of Enterprise ML (745 respondents) found that only 22% of companies using ML had successfully deployed a model to production. [40] Their 2021 report (403 business leaders at companies with $100M+ revenue) found 64% of organizations take a month or longer to deploy a single trained model, with deployment times actually increasing despite growing budgets. [41] Cleanlab/MIT (August 2025, 1,837 respondents) found only 95 respondents (~5.2%) had AI agents live in production (not pilot/POC). [42]

On MLOps maturity, S&P Global/451 Research (2025) reports only 27% of organizations have invested in MLOps tools [43] (up from 24% year-over-year), with 42% expecting to start within 12 months. [16] Academic literature (Zarour et al., 2025, systematic review of 45 articles) found 70% of organizations are investing in MLOps tools but 55% cite lack of adequate MLOps practices as a major obstacle. [43] The global MLOps market reached $2.19 billion in 2024 and is projected to reach $16.6 billion by 2030 (Grand View Research). [44]

3. Data quality — not algorithms — is the real bottleneck

Every major survey identifies data readiness as the primary blocker for AI deployment. The evidence is overwhelming and consistent across sources.

Only 7% of enterprises say their data is "completely ready" for AI adoption, [45] according to Cloudera and Harvard Business Review Analytic Services (published March 2026, 230+ respondents surveyed October 2025). An additional 27% described their data as "not very" or "not at all" ready, and 73% said their organization should prioritize AI data quality more than it currently does. [45] The Cisco AI Readiness Index (2024) found only 14% of organizations fully ready to integrate AI. [4] Gartner's survey of 1,203 data management leaders (July 2024) found 63% do not have or are unsure if they have the right data management practices for AI. [46] Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. [20]

The Informatica CDO Insights 2025 Survey (600 CDOs/CDAOs across the U.S., Europe, and Asia, [47] conducted by Wakefield Research) found that data quality, completeness, and readiness was tied as the top obstacle to AI success at 43% [48] (alongside lack of technical maturity), with 35% citing skills shortages. [3] Critically, 67% of organizations have been unable to transition even half of their GenAI pilots to production, [49] and 92% are concerned they are accelerating AI adoption even as they discover underlying problems with data and organizational readiness. [48] The Wavestone/NewVantage Partners 2024 Executive Survey (100+ Fortune 1000 organizations) found that 92.7% of executives identify data as the most significant barrier to AI implementation, [34][18] and only 37% of organizations have been able to improve data quality. [50] Cultural and human factors remain persistent: 78% say people, culture, and process are barriers to becoming data-driven. [50]

How data scientists actually spend their time

The frequently cited claim that "data scientists spend 80% of their time on data preparation" requires qualification. The original source was CrowdFlower's 2016 survey (~80 respondents). It found 60% of time spent on cleaning/organizing and 19% on collecting data, so the ~80% figure appears only when the two categories are combined (79%). [51][52] Subsequent, larger surveys show lower but still substantial figures. Anaconda's 2022 State of Data Science (3,493 respondents from 133 countries) found 37.75% of time spent on data preparation and cleaning, still the single largest time allocation. [53] Anaconda's 2020 survey (~2,400 respondents) reported ~45% on data preparation. [54] The Kaggle 2018 ML Survey reported approximately 26% on data tasks. [55] Taken together, a defensible consensus range is that data scientists spend 25–45% of their time on data preparation. The share is trending downward as tooling improves, but it remains the dominant time sink. [56]

Google's seminal paper "Hidden Technical Debt in Machine Learning Systems" (Sculley et al., NeurIPS 2015) — now with over 1,200 citations on Semantic Scholar [57] — established the foundational insight that only a small fraction of real-world ML systems consists of ML code; the vast majority is data collection, verification, feature extraction, configuration, serving infrastructure, and monitoring. [58] This architecture of "technical debt" remains the structural reality behind most AI deployment failures. [59]

4. Executive ambition outpaces organizational reality by a wide margin

The data reveals a stark and measurable gap between C-suite AI urgency and what organizations can actually deliver.

On the pressure side, KPMG's 2025 Global CEO Outlook (1,350 CEOs, companies with $500M+ revenue) found 71% say AI is a top investment priority (up from 64%), with 69% allocating 10–20% of their budget to AI. [60] The Conference Board's 2026 C-Suite Outlook found 43% named AI and technology as their #1 investment priority. [61] Zapier's enterprise survey (September 2025, 532 U.S. C-suite executives) found 81% of companies feel peer pressure from competitors to speed up AI adoption and 92% are treating AI as a priority. [62] McKinsey (2025) found 92% of executives planned to boost AI spending in the next three years. [63][64]

On the reality side, PwC's 29th Global CEO Survey (January 2026, 4,454 CEOs across 95 countries) found 56% of CEOs say they are getting "nothing" from AI — neither increased revenue nor decreased costs. Only 10–12% report benefits on both revenue and cost. [65] The Kyndryl Readiness Report (2025, 3,700 executives) found 61% of CEOs are under increasing pressure to show returns on AI investments compared to a year ago. [66] AWS and Harvard Business Review Analytic Services (623 respondents) found 84% believe AI will transform their business, but only 26% report being "very effective" at leveraging any type of AI. Just 13% believe their data architecture is well-equipped for agentic AI, and only 11% report being "very well-prepared" with adequate governance structures. [67]

A particularly revealing disconnect appears in the Gallup workforce data (Q3 2025, 23,068 U.S. adults), among the most methodologically rigorous sources here given its nationally representative sample. While 44% of employees say their organization has begun integrating AI, only 22% say their organization has communicated a clear plan or strategy. Only 30% say it has general guidelines or formal policies for AI at work. The 14-point gap between integration (44%) and formal guidelines (30%) is a structural indicator of organizations deploying AI faster than they can manage it.

On governance specifically, the EY Responsible AI Pulse Survey (March–April 2025, 975 C-suite leaders across 21 countries) found 74% have AI integrated into initiatives, but only one-third have responsible controls for current models. Only 18% of CEOs said their organizations have strong controls for fairness and bias. [68] The Pacific AI 2025 Survey (351 respondents) found that while 75% have AI usage policies, only 36% have adopted a formal governance framework. [69] Stanford's AI Index 2025 reported only 11% have fully implemented fundamental responsible AI capabilities. [70][71]

5. Named frameworks for AI readiness assessment

A substantial ecosystem of named frameworks exists for organizations seeking to assess and improve AI readiness. These span standards bodies, consultancies, academia, and civil society.

Standards-body frameworks anchor the landscape. The NIST AI Risk Management Framework (AI RMF 1.0), [72] released January 2023, provides four core functions (Govern, Map, Measure, Manage) and seven trustworthiness characteristics. NIST added a Generative AI Profile (NIST AI 600-1) in July 2024 to address GenAI-specific risks. ISO/IEC 42001:2023 is the world's first certifiable AI management system standard, with 38 specific controls covering the full AI lifecycle using Plan-Do-Check-Act methodology. The OECD AI Principles (adopted May 2019, updated May 2024 with 47 government adherents) provide five value-based principles and remain the primary intergovernmental standard. [73][74] The IEEE 7000-2021 standard establishes processes for addressing ethical concerns during system design, [75][76] complementing IEEE's broader Ethically Aligned Design initiative (developed by 700+ global experts). [77]
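
One way to put the RMF's four functions to work is as a coverage check on an AI project plan. The Python sketch below is a hypothetical illustration, not a NIST artifact. The activity descriptions and the `coverage_gaps` helper are invented for this example. The only element taken from the framework is the four function names.

```python
from enum import Enum


class RmfFunction(Enum):
    # The four core functions named in NIST AI RMF 1.0.
    GOVERN = "Govern"
    MAP = "Map"
    MEASURE = "Measure"
    MANAGE = "Manage"


def coverage_gaps(plan: dict[str, RmfFunction]) -> list[str]:
    """Return the RMF functions that no planned activity addresses, in RMF order."""
    covered = set(plan.values())
    return [f.value for f in RmfFunction if f not in covered]


# Hypothetical activities for a single AI use case.
plan = {
    "Assign an accountable owner and approval process": RmfFunction.GOVERN,
    "Document intended use, users, and deployment context": RmfFunction.MAP,
    "Track accuracy and bias metrics before launch": RmfFunction.MEASURE,
}
print(coverage_gaps(plan))  # ['Manage']
```

This plan measures risk but includes nothing for responding to it after launch, so `Manage` is flagged.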

Consultancy maturity models provide organizational benchmarking. Gartner's AI Maturity Model assesses [78][79] five levels [78][80] (Awareness through Transformational) [81] across seven pillars: [82] AI Strategy, Use-Case Portfolio, Governance, Engineering, Data, Ecosystems, and People & Culture. [78][79] Gartner's own data shows only 9% of organizations are "AI-mature" (2024). [19] The Deloitte Trustworthy AI Framework aligns with NIST AI RMF's seven trustworthiness characteristics and covers the full AI lifecycle. [83] McKinsey/QuantumBlack's annual State of AI survey tracks adoption and defines "AI high performers" through EBIT impact metrics, though it functions more as benchmarking research than a formal maturity model.

Data management foundations include the EDM Council's DCAM v3 (2025), which assesses eight core components [84] with 38 capabilities and 136 sub-capabilities, [85] scored from 1 (Not Initiated) to 6 (Enhanced) [84] — updated in v3 for AI/ML integration. [86] DAMA-DMBOK 2.0 (2017, with 3.0 in development) provides the vendor-neutral knowledge base across 11 data management areas. [87][88] Neil Lawrence's Data Readiness Levels (2017, [89] arXiv:1705.02245) — modeled after Technology Readiness Levels [89] — offer a three-band assessment [90] (Accessibility → Validity → Utility) for evaluating whether data is fit for a specific AI purpose.
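
The ordering in Lawrence's bands matters. Data cannot be judged useful for a question until it is accessible and its validity has been checked. The minimal Python sketch below models that gating. The band names follow the Accessibility, Validity, Utility progression described above. Representing each band as a list of pass/fail checks, and the example checks themselves, are assumptions for illustration rather than part of Lawrence's paper.

```python
from enum import IntEnum


class ReadinessBand(IntEnum):
    # Ordered bands: a dataset must clear each one before the next counts.
    NONE = 0
    ACCESSIBILITY = 1
    VALIDITY = 2
    UTILITY = 3


def readiness(checks: dict[ReadinessBand, list[bool]]) -> ReadinessBand:
    """Return the highest band reached, stopping at the first band that fails."""
    reached = ReadinessBand.NONE
    for band in (ReadinessBand.ACCESSIBILITY, ReadinessBand.VALIDITY, ReadinessBand.UTILITY):
        results = checks.get(band, [])
        # A band with no recorded checks, or any failing check, stops progress.
        if not results or not all(results):
            break
        reached = band
    return reached


# Hypothetical checks for one dataset.
status = readiness({
    ReadinessBand.ACCESSIBILITY: [True, True],  # e.g. access granted, usage rights cleared
    ReadinessBand.VALIDITY: [True, False],      # e.g. schema verified, missing values unresolved
    ReadinessBand.UTILITY: [True],              # ignored: validity has not been cleared
})
print(status.name)  # ACCESSIBILITY
```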

Responsible AI documentation frameworks include Model Cards (Mitchell, Gebru et al., 2019) for standardized ML model documentation, [91] Datasheets for Datasets (Gebru et al., 2018/2021) for dataset documentation and bias assessment, [92] and Google's Data Cards Playbook (2022) for participatory dataset documentation. [93] Canada's Algorithmic Impact Assessment (2019) is the first country-level mandatory framework for automated decision systems, [94] with four impact levels and proportionate requirements. [95] The EU AI Act (2024) introduces Fundamental Rights Impact Assessments for high-risk systems. [96] Microsoft's Responsible AI Standard v2 (June 2022) translates six principles into specific, actionable engineering requirements [97] and is freely available. The World Economic Forum's AI Governance Alliance (launched [98] 2023) includes the Presidio AI Framework for GenAI lifecycle risk assessment and a 2025 framework for agentic AI governance. [99]

6. Problem scoping and success criteria are the most underrated failure drivers

The RAND Corporation's 2024 study identified misunderstanding or miscommunicating the problem to be solved as the single most common root cause of AI project failure. [100][6] This is not an infrastructure or technology issue — it is a strategic and organizational one. RAND's researchers emphasized that "chasing the latest and greatest advances in AI for their own sake is one of the most frequent pathways to failure" and that "successful projects are laser-focused on the problem to be solved, not the technology used to solve it." [4]

Quantitative evidence supports this finding. The Rexer Analytics 2023 Data Science Survey found only 34% of data scientists said objectives are usually well-defined before projects begin. Adobe's 2026 AI and Digital Trends Report (3,000 executives surveyed) found only 44% have implemented a measurement framework for generative AI and only 31% for agentic AI. That means more than half of respondents (56%) have no formal way to measure whether their generative AI works. Gartner's 2024 survey found 49% cite difficulty estimating or demonstrating the value of AI projects as the primary adoption obstacle. [19]

McKinsey's 2025 findings reinforce that the differentiator between the 6% of AI high performers and everyone else is not technology but approach. High performers are [13] 3× more likely to report strong senior leadership ownership [13] and 3.6× more likely to pursue transformative change [101] (rather than just efficiency). [102] Critically, organizations reporting significant returns were twice as likely to have redesigned workflows before selecting models. [15][103] Yet only 21% of organizations using GenAI have fundamentally redesigned even some workflows. [104][105]

Aggregated analysis from Pertama Partners (February 2026, drawing on RAND, MIT, McKinsey, Deloitte, and Gartner data plus ~2,400 enterprise initiatives) quantified the scoping problem further: 73% of failed projects lacked clear executive alignment on success metrics, [106] with success criteria typically added retroactively an average of 8 months post-approval. Projects with clear pre-approval metrics showed a 54% success rate versus 12% without. Sustained executive sponsorship yielded a 68% success rate versus 11% without. [106] (Note: Pertama Partners is a consulting firm synthesizing sources; these granular figures may partly derive from their own client engagements rather than purely from the cited primary research.)
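
Paired rates like these are easy to misread, because the same gap can be stated in percentage points or as a ratio. This small Python sketch computes both for the two comparisons above. The figures are the ones quoted in the paragraph, and the caveat about their provenance still applies. Neither number says anything about causation, since projects that define metrics early may differ in other ways too.

```python
def compare(with_rate: float, without_rate: float) -> tuple[float, float]:
    """Absolute gap (percentage points) and ratio between two success rates (%)."""
    return with_rate - without_rate, with_rate / without_rate


comparisons = [
    ("clear pre-approval metrics", 54, 12),
    ("sustained executive sponsorship", 68, 11),
]
for label, with_rate, without_rate in comparisons:
    gap, ratio = compare(with_rate, without_rate)
    print(f"{label}: +{gap} points, {ratio:.1f}x")
# clear pre-approval metrics: +42 points, 4.5x
# sustained executive sponsorship: +57 points, 6.2x
```

The ratios look dramatic partly because the "without" rates are small. The point gaps are often the clearer way to present these differences.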

Conclusion: converging evidence points to an organizational, not technological, problem

The research converges on several findings that should inform any discussion of enterprise AI deployment. First, the ~5% success-at-scale rate is the most robust cross-validated finding. It emerged independently from McKinsey (n=1,993), [1] BCG (n=1,250), [2] and MIT across different methodologies and populations. Second, failure rates are rising, not falling: S&P Global's data show abandonment jumping from 17% to 42% year over year. [15][17] Third, the bottleneck is overwhelmingly organizational. BCG's 10/20/70 finding (10% algorithms, 20% technology, 70% people and processes) [11] and RAND's identification of problem misunderstanding as the top failure cause [100][6] both point away from technical solutions. Fourth, a comprehensive ecosystem of readiness frameworks exists, from NIST AI RMF to ISO 42001 [107] to Data Readiness Levels. Adoption remains low, however: only 36% of organizations have a formal governance framework [69] and only 11% have fully implemented responsible AI capabilities. [71] The gap is not in available tools or knowledge. It is in the organizational discipline to use them before, rather than after, deploying AI at scale. [108]

- Lighthouse AI — https://lighthouselaunch.com/blog/mckinsey-state-of-ai-2025-report-analysis

- BCG — https://media-publications.bcg.com/The-Widening-AI-Value-Gap-October-2025.pdf

- Informatica — https://www.informatica.com/blogs/the-surprising-reason-most-ai-projects-fail-and-how-to-avoid-it-at-your-enterprise.html

- RAND — https://www.rand.org/content/dam/rand/pubs/research_reports/RRA2600/RRA2680-1/RAND_RRA2680-1.pdf

- Medium — https://medium.com/@archie.kandala/the-production-ai-reality-check-why-80-of-ai-projects-fail-to-reach-production-849daa80b0f3

- Salesforcedevops — https://salesforcedevops.net/index.php/2024/08/19/ai-apocalypse/

- InsideAI News — https://insideainews.com/2024/08/14/new-rand-research-why-do-ai-projects-fail/

- Asia Financial — https://www.asiafinancial.com/study-suggests-ways-to-overcome-high-failure-rate-in-ai-projects

- Quicklaunchanalytics — https://quicklaunchanalytics.com/bi-blog/why-80-of-ai-projects-fail-before-they-start-its-your-data-foundation/

- PR Newswire — https://www.prnewswire.com/news-releases/ai-adoption-in-2024-74-of-companies-struggle-to-achieve-and-scale-value-302285294.html

- MediaBrief — https://mediabrief.com/bcg-ai-adoption-in-2024/

- Boston Consulting Group — https://www.bcg.com/press/24october2024-ai-adoption-in-2024-74-of-companies-struggle-to-achieve-and-scale-value

- McKinsey & Company — https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

- McKinsey & Company — https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-2024

- WorkOS — https://workos.com/blog/why-most-enterprise-ai-projects-fail-patterns-that-work

- S&P Global — https://www.spglobal.com/market-intelligence/en/news-insights/research/ai-experiences-rapid-adoption-but-with-mixed-outcomes-highlights-from-vote-ai-machine-learning

- S&P Global — https://www.spglobal.com/market-intelligence/en/news-insights/research/2025/10/generative-ai-shows-rapid-growth-but-yields-mixed-results

- Talyx AI — https://www.talyx.ai/insights/enterprise-ai-implementation-failure

- Gartner — https://www.gartner.com/en/newsroom/press-releases/2024-05-07-gartner-survey-finds-generative-ai-is-now-the-most-frequently-deployed-ai-solution-in-organizations

- Astrafy — https://astrafy.io/the-hub/blog/technical/scaling-ai-from-pilot-purgatory-why-only-33-reach-production-and-how-to-beat-the-odds

- Gartner — https://www.gartner.com/en/articles/genai-project-failure

- Medium — https://medium.com/@stahl950/the-ai-implementation-paradox-why-42-of-enterprise-projects-fail-despite-record-adoption-107a62c6784a

- Gartner — https://www.gartner.com/en/newsroom/press-releases/2025-06-25-gartner-predicts-over-40-percent-of-agentic-ai-projects-will-be-canceled-by-end-of-2027

- Deloitte — https://www.deloitte.com/us/en/about/press-room/state-of-generative-ai-Q3.html

- m&k — https://www.markt-kom.com/wp-content/uploads/2025/01/Download-Deloitte-Report-State-of-Generative-AI-Englisch.pdf

- Deloitte Insights — https://www.deloitte.com/us/en/insights/topics/digital-transformation/generative-ai-and-the-future-enterprise.html

- Psi — https://www.psi.de/fileadmin/downloads/de/loesungen/anwendungsfaelle/LOG/Deloitte-Bericht-State-of-Generative-AI.pdf

- Nationalcioreview — https://nationalcioreview.com/articles-insights/extra-bytes/mit-finds-genai-projects-fail-roi-in-95-of-companies/

- CoLab — https://www.colabsoftware.com/post/mit-nanda-report-engineers-adopt-ai

- Fortune — https://fortune.com/2025/08/18/mit-report-95-percent-generative-ai-pilots-at-companies-failing-cfo/

- DX — https://getdx.com/blog/the-ai-divide/

- Sean Goedecke — https://www.seangoedecke.com/why-do-ai-enterprise-projects-fail/

- Yahoo Finance — https://finance.yahoo.com/news/mit-report-95-generative-ai-105412686.html

- Dynatrace — https://www.dynatrace.com/news/blog/why-ai-projects-fail/

- EdTech Digest — https://www.edtechdigest.com/2025/12/05/from-pilot-to-scale-why-most-ai-projects-fail-to-move-the-needle/

- ProjectManagement.com — https://www.projectmanagement.com/blog-post/79154/why-do-ai-projects-fail-

- Medium — https://mtszkw.medium.com/why-do-87-of-data-science-projects-fail-and-are-we-sure-that-it-is-true-fe8b5ba1404c

- Medium — https://user5724987.medium.com/why-do-87-of-data-science-projects-fail-and-are-we-sure-that-it-is-true-fe8b5ba1404c

- Gartner — https://www.gartner.com/en/newsroom/press-releases/2022-08-22-gartner-survey-reveals-80-percent-of-executives-think-automation-can-be-applied-to-any-business-decision

- ML Ops — https://ml-ops.org/content/motivation

- Scribd — https://www.scribd.com/document/495698473/Algorithmia-2021-Enterprise-ML-trends

- Cleanlab — https://cleanlab.ai/ai-agents-in-production-2025/

- ScienceDirect — https://www.sciencedirect.com/science/article/abs/pii/S0950584925000722

- Grand View Research — https://www.grandviewresearch.com/industry-analysis/mlops-market-report

- Cloudera — https://www.cloudera.com/about/news-and-blogs/press-releases/2026-03-05-only-7-percent-of-enterprises-say-their-data-is-completely-ready-for-ai-according-to-new-report-from-cloudera-and-harvard-business-review-analytic-services-reveals.html

- Gartner — https://www.gartner.com/en/newsroom/press-releases/2025-02-26-lack-of-ai-ready-data-puts-ai-projects-at-risk

- CDO Magazine — https://www.cdomagazine.tech/branded-content/watch-now-cdo-insights-2025-racing-ahead-on-genai-and-data-investments-despite-potential-speed-bumps

- CDO Magazine — https://www.cdomagazine.tech/branded-content/delivering-on-the-promise-of-business-value-insights-from-600-cdos-on-making-ai-pilots-production-ready-in-2025

- Informatica — https://www.informatica.com/about-us/news/news-releases/2025/01/20250128-global-data-leaders-seek-to-harness-the-power-of-genai-for-ai-driven-success.html

- Squarespace — https://static1.squarespace.com/static/62adf3ca029a6808a6c5be30/t/66635b1a6aeebf254874237b/1717787418584/DataAI-ExecutiveLeadershipSurveyFinalAsset.pdf

- Enterprise Knowledge — https://enterprise-knowledge.com/the-value-of-data-catalogs-for-data-scientists/

- Timextender — https://blog.timextender.com/reversing-the-80-20-rule-in-data-wrangling

- Predictiveanalyticsworld — https://www.predictiveanalyticsworld.com/machinelearningtimes/state-of-data-science-2022/12789/

- HPCwire — https://www.hpcwire.com/bigdatawire/2020/07/06/data-prep-still-dominates-data-scientists-time-survey-finds/

- Lost Boy — https://blog.ldodds.com/2020/01/31/do-data-scientists-spend-80-of-their-time-cleaning-data-turns-out-no/

- University of Maryland — https://lib.guides.umd.edu/datavisualization/clean

- semanticscholar — https://www.semanticscholar.org/paper/Hidden-Technical-Debt-in-Machine-Learning-Systems-Sculley-Holt/1eb131a34fbb508a9dd8b646950c65901d6f1a5b

- ResearchGate — https://www.researchgate.net/publication/319769912_Hidden_Technical_Debt_in_Machine_Learning_Systems

- NeurIPS — https://papers.neurips.cc/paper/5656-hidden-technical-debt-in-machine-learning-systems.pdf

- KPMG — https://kpmg.com/xx/en/our-insights/value-creation/global-ceo-outlook-survey.html

- Conference Board — https://www.conference-board.org/research/ced-policy-backgrounders/ai-and-the-c-suite-implications-for-ceo-strategy-in-2026

- Zapier — https://zapier.com/blog/ai-resistance-survey/

- FullStack — https://www.fullstack.com/labs/resources/blog/generative-ai-roi-why-80-of-companies-see-no-results

- Fullview — https://www.fullview.io/blog/ai-statistics

- Fortune — https://fortune.com/2026/01/19/pwc-global-chairman-mohamed-kande-ai-nothing-basics-29th-ceo-survey-davos-world-economic-forum/

- Medium — https://skooloflife.medium.com/how-the-ai-industry-created-644-billion-of-economic-vandalism-in-2025-1ca0d71ab6f2

- AWS — https://aws.amazon.com/blogs/enterprise-strategy/agentic-ai-bridging-the-widening-gap-between-ambition-and-execution/

- EY — https://www.ey.com/en_ro/newsroom/2025/08/ey-survey-ai-adoption-outpaces-governance-as-risk-awareness

- Pacific AI — https://pacific.ai/2025-ai-governance-survey/

- Stanford — https://hai.stanford.edu/ai-index/2025-ai-index-report

- Virtasant — https://www.virtasant.com/ai-today/3-ai-governance-framework-questions-keeping-leaders-awake

- NIST — https://airc.nist.gov/airmf-resources/airmf/

- OECD — https://www.oecd.org/en/topics/ai-principles.html

- American National Standards Institute — https://www.ansi.org/standards-news/all-news/5-9-24-oecd-updates-ai-principles

- RAI Toolkit — https://rai-toolkit.github.io/governance/standard/IEEE-7000-2021-IEEE-Standard-Model-Proc/

- Create Digital — https://createdigital.org.au/engineering-ethics-standards-software/

- Ethics In Action — https://ethicsinaction.ieee.org/

- Gartner — https://www.gartner.com/en/newsroom/press-releases/2025-06-30-gartner-survey-finds-forty-five-percent-of-organizations-with-high-artificial-intelligence-maturity-keep-artificial-intelligence-projects-operational-for-at-least-three-years

- Gartner — https://www.gartner.com/en/documents/5937907

- Medium — https://medium.com/@mohsen.semsarpour/gartner-ai-maturity-model-2c01fab629b6

- United States Artificial Intelligence Institute — https://www.usaii.org/ai-insights/understanding-ai-maturity-levels-a-roadmap-for-strategic-ai-adoption

- Gartner — https://www.gartner.com/en/chief-information-officer/research/ai-maturity-model-toolkit

- Deloitte — https://www.deloitte.com/us/en/what-we-do/capabilities/applied-artificial-intelligence/articles/ai-risk-management.html

- Capco — https://www.capco.com/intelligence/capco-intelligence/the-dcam-framework

- DGPO — https://dgpo.org/wp-content/uploads/2016/06/EDMC_DCAM_-_WORKING_DRAFT_VERSION_0.7.pdf

- EDM Council — https://edmcouncil.org/announcement/announcing-dcam-v3-meet-the-new-standard-for-your-data/

- DAMA International — https://dama.org/learning-resources/dama-data-management-body-of-knowledge-dmbok/

- OvalEdge — https://www.ovaledge.com/blog/dama-dmbok-data-governance-framework

- Read the Docs — https://nlp-data-readiness.readthedocs.io/en/latest/data-readiness-levels.html

- arXiv — https://arxiv.org/html/2404.05779v1

- ACM Digital Library — https://dl.acm.org/doi/10.1145/3287560.3287596

- arXiv — https://arxiv.org/html/2405.06258v1

- Google Research — https://research.google/blog/the-data-cards-playbook-a-toolkit-for-transparency-in-dataset-documentation/

- IAPP — https://iapp.org/resources/article/global-ai-governance-canada

- Canada.ca — https://www.canada.ca/en/government/system/digital-government/digital-government-innovations/responsible-use-ai/algorithmic-impact-assessment.html

- VerifyWise — https://verifywise.ai/lexicon/ai-impact-assessment

- VerifyWise — https://verifywise.ai/ai-governance-library/governance-frameworks/microsoft-responsible-ai-standard

- World Economic Forum — https://www.weforum.org/stories/2024/11/balancing-innovation-and-governance-in-the-age-of-ai/

- World Economic Forum — https://www.weforum.org/publications/ai-agents-in-action-foundations-for-evaluation-and-governance/

- RAND — https://www.rand.org/pubs/research_reports/RRA2680-1.html

- CoLab — https://www.colabsoftware.com/post/mckinseys-state-of-ai-2025-what-separates-high-performers-from-the-rest

- Libertify — https://www.libertify.com/interactive-library/state-of-ai-2025-mckinsey-report/

- Quest Blog — https://blog.quest.com/the-hidden-ai-tax-why-theres-an-80-ai-project-failure-rate/

- Mission Cloud — https://www.missioncloud.com/blog/ai-statistics-2025-key-market-data-and-trends

- McKinsey & Company — https://www.mckinsey.com/~/media/mckinsey/business%20functions/quantumblack/our%20insights/the%20state%20of%20ai/2025/the-state-of-ai-how-organizations-are-rewiring-to-capture-value_final.pdf

- Pertama Partners — https://www.pertamapartners.com/insights/ai-project-failure-statistics-2026

- DNV — https://www.dnv.com/services/iso-iec-42001-artificial-intelligence-ai--250876/

- WebProNews — https://www.webpronews.com/the-ai-deployment-crisis-hiding-in-plain-sight-why-most-companies-are-stuck-between-ambition-and-execution/

Commissioned from our research desk. Subject to final editorial discretion.