Every few months, a vendor walks into a strategy review with a polished maturity model. Slick PDF. Five levels. A spider chart showing you're at Level 2. And a clear path to Level 5 that just happens to require buying more of their products.

The maturity model is the sales pitch.

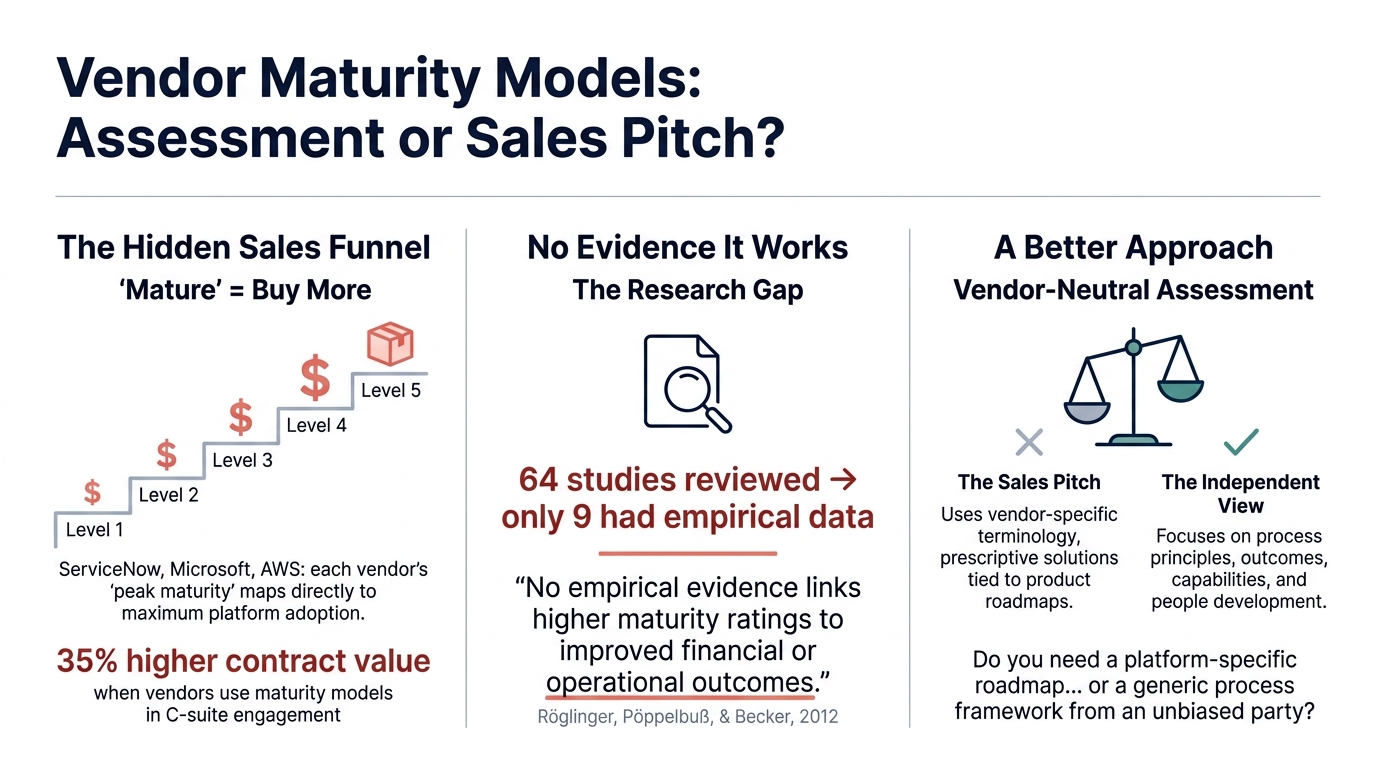

Carilu Dietrich, former CMO of Atlassian, published a piece in 2025 calling maturity frameworks "a hidden gem in your GTM strategy." She describes account teams using them to create urgency: "You're at Level 2, but your competitors are at Level 3." She cites figures showing that companies using maturity models for C-suite engagement saw 35% higher average contract value and were 2x more likely to expand within the account. The quiet part, said loud.

ServiceNow's ITOM maturity model maps each stage to a specific paid module. Microsoft's M365 model claims to be "led by business needs," then defines the top level of its Information Management competency as 95%+ usage across its workloads. AWS gates its Cloud Maturity Assessment behind your account team. "Mature" means "using more of our stuff."

Do the models actually work? Thordsen and Bick's 2023 systematic review analyzed 64 digital maturity model publications from 2011 to 2022. Only 9 offered empirical data linking maturity scores to performance, and 6 of those came from consultancies selling the models. Even Gartner's own data shows that fewer than 5% of organizations reach "optimized" maturity in data governance.

James Bach called this "level envy" in 1994: organizations displace their true mission of improvement with the artificial mission of achieving a higher score. He pointed out that Microsoft, Apple, and Symantec would all have scored Level 1 on the Capability Maturity Model. "The most powerful argument against the CMM … is the many successful companies that, according to the CMM, should not exist."

Dr. Nicole Forsgren and the DORA research team were unequivocal: maturity models are not the appropriate tool or mindset. They imply a finish line. They're linear and one-size-fits-all. Most measure tooling install base without tying it to outcomes.

Capital One's Drew Firment rejected vendor models entirely and built the "Cloudometer," a custom system measuring speed, quality, and cost against Capital One's own objectives. Teams set their own targets. The framework adapted as they learned. Dave Ulrich and Norm Smallwood's research in HBR found that well-managed companies tend to excel in only about three capabilities while maintaining parity in others. A generic model pushing advancement across every dimension actively misallocates your attention.

Building an internal capability model is genuinely difficult. You have to define what "good" looks like for your organization instead of outsourcing that judgment.

The next time someone presents a maturity model with your organization at Level 2, ask three questions: Who funded the model? What products map to Level 5? Has anyone validated that reaching Level 5 produces measurable outcomes?

The silence will tell you everything.

The vast majority of vendor-sponsored maturity models function as disguised product adoption roadmaps, not genuine strategic assessments. This finding is supported by a striking convergence of evidence: insider admissions from vendor marketing leaders, a decade of academic research showing almost no empirical link between maturity scores and business performance, and foundational critiques from thought leaders spanning 30 years. The pattern is consistent across Microsoft, AWS, Google Cloud, Salesforce, ServiceNow, and Gartner. Each publishes models where "mature" functionally means "using more of our products." Meanwhile, organizations spend billions on transformation programs that, by McKinsey and Bain estimates, fail to deliver their intended business outcomes 70–88% of the time.

The quiet part said loud: vendors openly weaponize maturity models for sales

The most damning evidence comes not from critics, but from vendor-side insiders who openly describe maturity models as go-to-market weapons.

Carilu Dietrich, former CMO of Atlassian (she led their IPO marketing), published a 2025 article titled "Maturity Frameworks: A Hidden Gem in Your GTM Strategy." She writes without irony: "A maturity model becomes a strategic asset when embedded into your go-to-market efforts," [1] noting benefits including "Bigger Deals — Mapping a path to strategic value unlocks cross-sell and upsell" and "Faster Sales Cycles — Executive buy-in accelerates decisions." [1] The stated goal: "It positions your company as an advisor, not a vendor" [1] — the entire purpose is to disguise selling as advising. She cites data showing companies using maturity models for C-suite engagement see 35% higher average contract value (McKinsey, 2023), 30% shorter sales cycles (Forrester, 2024), and 2× higher likelihood of expanding within the account (Gartner, 2024). [1]

Dietrich's Marketo case study is the smoking gun. Account teams used the maturity model in Quarterly Business Reviews to create urgency: "You're at Level 2, but your competitors are at Level 3 — here's how we can help you get there." [1] At BEA Systems, an online maturity self-assessment generated leads with an offer for a "consultative advisory session" from professional services — pulling the company "out of technical conversations and into strategic ones. It brought financial buyers to the table."

Alyssa Meritt (former strategist at Airship, Contentful, and Lytics) is equally explicit: "Marketing teams can use maturity models as a lead generation tool. Sales teams can use maturity models to help SaaS prospects articulate their current and desired state. Within Customer Success, maturity models are a mechanism to align around a shared goal… often corresponding with the use of more advanced features or a new product offering." At Airship, a Mobile Maturity Model behind a lead-gen form "provided a consistent source of leads for several months after its launch." [2] At Contentful, the model was "used externally with prospects to better understand their business goals and highlight Contentful's product." [2]

Even the infrastructure layer is revealing. Pointerpro, a SaaS platform, markets itself explicitly as a tool to help vendors build maturity assessments as automated lead generation: "A landing page and assessment offers consultants a way to effectively automate their lead generation process." [3]

Each maturity level maps to a product SKU — the evidence across six vendors

The pattern repeats with mechanical precision across the industry's largest vendors. Each defines "mature" as "deeper adoption of our specific product suite."

ServiceNow's ITOM Maturity Model is the most blatant example. Its four stages each map directly to a specific paid module: Stage 1 (Consolidation) requires ServiceNow Discovery; Stage 2 (Service Aware) requires Service Mapping; Stage 3 (Data Aware) requires Event Management; Stage 4 (AI Driven) requires ServiceNow's ML/AI capabilities. [4] The maturity model is literally a product adoption roadmap: each level equals buying the next module.

Microsoft's M365 Maturity Model claims to be "non-partisan" and "led by business needs rather than technology features." [5] Yet the practical implementation documents reveal otherwise: organizations "must reach Level 300 in Business Process, Staff & Training, and Management of Content to enable successful Copilot adoption." [6][7] The highest maturity level (Level 500, "Optimizing") for Information Management describes organizations "using the core Microsoft 365 workloads" with "usage of >95%" [8] across external sharing, Teams, and Power Platform.

AWS's Cloud Maturity Assessment is a 77-question evaluation [9] described as "a no-cost assessment available through your AWS account team" [9] — you must engage AWS sales to receive it. The Cloud Transformation Maturity Model's highest stage (Optimization) is fundamentally about deeper AWS adoption. [10] AWS's own whitepaper states: "AWS and its thousands of partners have leveraged this model to accelerate customer adoption of AWS Cloud services." [11]

Google Cloud's Adoption Framework gates its maturity assessment through sales: "If you'd like to ensure success, you might consider engaging Google Cloud… Working with a Technical Account Manager (TAM), you can perform a high-level assessment." [12] Its highest maturity phase describes use of Google-specific tools like "Stackdriver" and "Forseti Security." [12]

Salesforce's 2025 Agentic Maturity Model nudges organizations toward platform consolidation from Level 1: "Ensure data quality and availability by starting with a harmonized data source (e.g., one CRM Org)." [13] Higher levels require deeper integration with Salesforce's Agentforce platform.

Gartner's ITScore family gates assessments behind subscriptions and generates roadmaps that link to Gartner's own advisory services, [14] creating a self-reinforcing advisory loop.

| Vendor | Maturity Model | What "Mature" Requires |
|---|---|---|
| ServiceNow | ITOM Maturity | Each level = next paid module (Discovery → Service Mapping → Event Management → AI/ML) |
| Microsoft | M365/Copilot | Level 300+ across competencies + Copilot licenses, 95%+ platform usage |
| AWS | Cloud Transformation | Organization-wide AWS adoption; assessment requires engaging sales team |
| Google Cloud | Cloud Adoption | TAM engagement + GCP-native tools (Stackdriver, Forseti) |
| Salesforce | Agentic Maturity | Consolidated CRM Org + Agentforce + Data Cloud |
| Gartner | ITScore | Subscription-gated; roadmaps link to Gartner advisory services |

Climbing the ladder to nowhere: maturity scores don't predict business outcomes

The most authoritative academic study on this question is Thordsen and Bick's 2023 systematic review in Information Systems and e-Business Management, which analyzed 64 digital maturity model publications from 2011–2022. Their findings are devastating for maturity model proponents:

Only 9 of 64 publications offered any empirical data linking maturity to firm performance. [15] Of those 9, 6 came from consultancy backgrounds (the very organizations selling these models) and presented "the most extreme and 'promising' empirical evidence." [15] That leaves just 3 of 64 publications with empirical evidence from outside the consulting industry. The authors conclude: "The often proclaimed statement 'the more digital, the better' is according to these experts nothing more than a presumption." [15] Scholars "univocally agree that there is a clear gap of knowledge" about whether maturity models add value compared to traditional improvement approaches. [15]

The broader transformation data tells a consistent story of failure. BCG's 2020–2021 research across 850+ companies found that only 35% of digital transformations achieved their objectives. [16] Its 2024 agile-specific study uncovered an "illusory agile" phenomenon: similar percentages of truly agile (91%) and illusory-agile (87%) companies perceive themselves as successful, yet the truly agile companies are almost twice as likely to actually realize their goals. [17] McKinsey consistently reports a 70% transformation failure rate, [16] and one widely cited estimate puts the waste at roughly $900 billion of the $1.3 trillion invested in a single year. [18] Bain's 2024 estimate is even worse: 88% of business transformations fail to achieve their original ambitions. [19]

Gartner's own data undermines the value of its models. Its widely cited 2019 prediction stated: "Through 2022, only 20% of analytic insights will deliver business outcomes." [20][21] A third-party analysis of the Gartner Analytics Maturity Model found that only 13% of organizations claim to have achieved the model's vision, with value actually turning negative at higher difficulty levels. [22] In data governance, Gartner's own surveys show that fewer than 5% of organizations reach "optimized" maturity, while most cluster at levels 2–3. [23] A Forrester study found executives are "especially prone to overestimating their progress when compared to those who are actually doing the work," [24] with 65% believing themselves "advanced" while fewer than 12% actually were. [25]

Three decades of critiques the industry keeps ignoring

The critique of maturity models isn't new — it spans 30 years of increasingly pointed analysis from some of technology's most respected thinkers.

James Bach fired the opening salvo in 1994 with "The Immaturity of CMM," [26] noting that the original Capability Maturity Model "has no formal theoretical basis" and is built on "very knowledgeable people's experience, not empirical research." [26] He coined the term "level envy" — when organizations displace their true mission (improvement) with the artificial mission of achieving a higher maturity level. [26] His most potent observation: the most innovative companies of the era (Microsoft, Apple, Borland, Symantec) would all score Level 1 on the CMM because they didn't formally manage requirements documents. [26] "The most powerful argument against the CMM as an effective prescription for software processes is the many successful companies that, according to the CMM, should not exist." [26] In a 1999 postscript, he added: "It gives hope, and an illusion of control, to management… this step-by-step plan usually becomes a substitute for genuine education in engineering management." [26]

Martin Fowler (Thoughtworks Chief Scientist) wrote in 2014: "It feels too easy to see maturity models as catnip for consultants looking to sell performance improvement efforts." [27] He observed that CMM certification became "a whole world of often-bogus certification levels" and that "using a maturity model to say one group is better than another is a classic example of ruining an informational metric by incentivizing it." [27] His colleague Jason Yip identified a core structural flaw: "The maturity model conflates level of effectiveness with learning path." [27]

Barry O'Reilly (author of Unlearn and Lean Enterprise) delivered perhaps the most quotable line in 2019: "The vast majority of maturity models are sales tools created to market a consistent buyer's path, rather than a dynamic, context-specific, or customized outcome that a company wishes to achieve." [28] He also traces the origin story: the CMM was originally developed for the US Department of Defense as a way to evaluate software contractors. [29] In O'Reilly's reading, that makes it a tool designed to assess someone else's capabilities rather than to guide self-improvement. [28]

Christiaan Verwijs offered a memorable analogy: "Maturity models are the best friend of consultants… Just like with snack food, what looks appealing at first glance doesn't actually offer anything of substance on closer inspection. And it's bad for you." [30] Ben Morris (VP of Architecture at Ideagen) reinforced the point: "In the absence of any credible, published design process, a maturity model can feel like nothing more than marketing tools for consultants." [31]

The conceptual critiques cluster around five recurring problems:

- The false assumption of linear progression. [30] Real improvement involves obstacles, tangents, and context-dependent sequencing. [31]
- The conflation of effectiveness with learning path (Yip, quoted by Fowler). [32]
- The one-size-fits-all fallacy. As Adrian Howard notes, what works for a 30-person company differs entirely from what works for a multinational. [33]
- The checkbox/gaming problem: organizations chase levels instead of genuine improvement. [34][35]
- Process compliance over outcomes: models measure whether activities happen, not whether they produce results. [36][31]

The harder path that actually works: capability models tied to outcomes

The most empirically rigorous alternative comes from Dr. Nicole Forsgren, Jez Humble, and Gene Kim in Accelerate (2018), [33] based on four years of DORA research across hundreds of organizations. [24] Their conclusion is unequivocal: "We cannot stress enough that maturity models are not the appropriate tool to use or mindset to have." [24]

Forsgren identifies four evidence-based reasons capability models are superior. First, maturity models imply a finish line [24] — "the most innovative companies and highest-performing organizations are always striving to be better and never consider themselves 'mature' or 'done.'" [24] Second, maturity models are linear and one-size-fits-all, while "capability models are multidimensional and dynamic," allowing different parts of the organization to take customized approaches. [37] Third, capability models are outcome-based: "Most maturity models simply measure the technical proficiency or tooling install base in an organization without tying it to outcomes. These end up being vanity metrics." [37][24] Fourth, maturity models are static in a world where what constitutes high performance changes yearly. [38][31]

On vendor bias specifically, Forsgren states: "Product vendors favor capabilities that align with their product offerings. Consultants favor capabilities that align with their background, their offering, and their home-grown assessment tool." [39]

The Capital One case study illustrates what the alternative looks like in practice. Drew Firment (former Technology Director of Cloud Engineering) rejected vendor cloud maturity models [28] and built the "Cloudometer" — a custom system measuring metrics specific to Capital One's objectives: speed, quality, and cost of cloud migrations. Teams set their own outcomes relative to organizational objectives, progress was visible across the entire department, and the framework adapted as they learned. [28] As O'Reilly notes: "A maturity model would never have given that real-time insight and accuracy of information." [28]

Even NIST explicitly rejects the maturity model framing for its Cybersecurity Framework. NIST CSF 2.0 (February 2024) states that its Implementation Tiers are "not designed to be a maturity model." [40] Instead, it uses Organizational Profiles — current and target states customized to each organization's context, risk appetite, and business strategy. [41] This is essentially a capability-based approach from the organization that arguably has the strongest technical credibility in the space.

The Maturity Mapping movement, led by Chris McDermott and Marc Burgauer and building on Simon Wardley's mapping technique, offers another alternative. Their core insight: "What we often experience when applying maturity models is that we don't really do what it says because in our circumstances this won't work… but now the model says we are immature." [32] Their approach creates context-specific assessments incorporating Social Practice Theory, the Cynefin framework, and Wardley Mapping. [42][43]

Dave Ulrich and Norm Smallwood's HBR framework ("Capitalizing on Capabilities," 2004) reinforces the point from the management strategy side: well-managed companies tend to excel in only three capabilities while maintaining industry parity in others [44] — meaning a generic maturity model pushing advancement across all dimensions actively misallocates attention.

Conclusion: what this means for leaders evaluating maturity models

The evidence reveals a structural conflict of interest so consistent that it qualifies as an industry pattern, not an aberration. When a vendor hands you a maturity assessment, you are receiving a document engineered to make you feel strategically inadequate in precisely the areas where that vendor sells solutions. The former CMO of Atlassian says so explicitly. The data also offers little reason to believe that climbing these ladders produces the promised outcomes. Only 9 of the 64 publications in the leading systematic review offered any empirical data linking maturity to performance, and most of those came from the consultancies selling the models. [15]

The alternative, building internal capability models tied to your specific business outcomes, is harder because it requires leaders to define what "good" looks like for their organization rather than outsourcing that judgment to a vendor's framework. But the DORA research, the Capital One case, and NIST's explicit rejection of maturity framing all point in the same direction: [45][46] toward context-specific, outcome-linked capability assessment and away from generic maturity ladders. The strongest single takeaway for a LinkedIn audience: the next time someone presents a maturity model with your organization scored at Level 2, ask who funded the model, what products map to Level 5, and whether anyone has empirically validated that reaching Level 5 produces measurable business outcomes. The silence will be informative.

Sources

- [1] Carilu: https://www.carilu.com/p/maturity-frameworks-a-hidden-gem
- [2] Meritt Strategy: https://www.merittstrategy.com/resources/building-a-maturity-model
- [3] Pointerpro: https://pointerpro.com/blog/maturity-model-to-generate-leads/
- [4] Einar: https://research.einar.partners/maturity-model/
- [5] Microsoft Learn: https://learn.microsoft.com/en-us/microsoft-365/community/microsoft365-maturity-model--intro
- [6] Microsoft Learn: https://learn.microsoft.com/en-us/microsoft-365/community/maturity-model-microsoft365-practical-scenarios-copilot-implementation
- [7] Microsoft Learn: https://learn.microsoft.com/en-us/microsoft-365/community/microsoft365-maturity-model--governance-and-compliance
- [8] Insentra Group: https://www.insentragroup.com/us/insights/geek-speak/modern-workplace/what-is-the-microsoft-365-maturity-model/
- [9] AWS: https://aws.amazon.com/blogs/publicsector/operationalizing-cloud-adoption-with-the-aws-cloud-maturity-assessment/
- [10] AWS: https://aws.amazon.com/blogs/publicsector/cloud-adoption-maturity-model-guidelines-to-develop-effective-strategies-for-your-cloud-adoption-journey/
- [11] AWS: https://d1.awsstatic.com/whitepapers/AWS-Cloud-Transformation-Maturity-Model.pdf
- [12] Google Cloud: https://services.google.com/fh/files/misc/google_cloud_adoption_framework_whitepaper.pdf
- [13] Salesforce: https://www.salesforce.com/news/stories/agentic-maturity-model/
- [14] Gartner: https://www.gartner.com/en/research/methodologies/gartner-score-diagnostic-family
- [15] Springer: https://link.springer.com/article/10.1007/s10257-023-00656-w
- [16] Integrate.io: https://www.integrate.io/blog/data-transformation-challenge-statistics/
- [17] BCG: https://web-assets.bcg.com/pdf-src/prod-live/why-companies-get-agile-right-wrong.pdf
- [18] Medium: https://medium.com/@tomlinsonroland/digitally-transformed-and-still-broken-ee0b374a160a
- [19] MeltingSpot Blog: https://blog.meltingspot.io/why-digital-transformation-projects-fail/
- [20] CIO: https://www.cio.com/article/193943/transforming-analytics-into-business-impact.html
- [21] MIT Sloan: https://mitsloan.mit.edu/ideas-made-to-matter/10-best-practices-analytics-success-including-3-you-cant-ignore
- [22] LinkedIn: https://www.linkedin.com/pulse/why-only-13-achieved-gartner-analytics-maturity-model-denis-sproten
- [23] Atlan: https://atlan.com/know/gartner/data-governance-maturity-model/
- [24] TechTarget: https://www.techtarget.com/searchitoperations/opinion/DevOps-Research-and-Assessment-team-dispels-maturity-model-myths
- [25] SupplyChainBrain: https://www.supplychainbrain.com/blogs/1-think-tank/post/29842-companies-are-wildly-overestimating-their-digital-maturity-in-supplier-management
- [26] Satisfice: https://www.satisfice.com/blog/archives/6208
- [27] Martin Fowler: https://martinfowler.com/bliki/MaturityModel.html
- [28] Barry O'Reilly: https://barryoreilly.com/explore/blog/why-maturity-models-dont-work/
- [29] Wikipedia: https://en.wikipedia.org/wiki/Capability_Maturity_Model
- [30] Medium: https://medium.com/the-liberators/whats-wrong-with-maturity-models-abfb6dd97607
- [31] Ben Morris: https://www.ben-morris.com/the-case-against-maturity-models/
- [32] Thinkinglabs: https://thinkinglabs.io/notes/2022/02/10/flowcon-maturity-mapping-or-how-to-tailor-capability-adoption-to-your-existing-culture-marc-burgauer.html
- [33] Container Solutions: https://blog.container-solutions.com/in-defense-of-maturity-models
- [34] Agility at Scale: https://agility-at-scale.com/safe/safe-principles/safe-principles-maturity-model/
- [35] VTI: https://vti.com.vn/capability-maturity-model-guide
- [36] Octopus Deploy: https://octopus.com/blog/devops-uses-capability-not-maturity
- [37] Holistics: https://www.holistics.io/blog/capability-models-not-maturity-models/
- [38] Andrew Clark: https://andrewclark.co.uk/product-book-summaries/accelerate
- [39] Abinoda: https://abinoda.com/book/accelerate
- [40] CyberSaint: https://www.cybersaint.io/blog/the-nist-cybersecurity-framework-implementation-tiers-explained
- [41] NIST: https://nvlpubs.nist.gov/nistpubs/CSWP/NIST.CSWP.29.pdf
- [42] Lean Agile Scotland: https://leanagile.scot/programme/maturity-mapping-using-wardley-maps-and-cynefin-create-context-specific-maturity-models
- [43] Medium: https://medium.com/@chrisvmcd/mapping-maturity-create-context-specific-maturity-models-with-wardley-maps-informed-by-cynefin-37ffcd1d315
- [44] Harvard Business Review: https://hbr.org/2004/06/capitalizing-on-capabilities
- [45] Amazon: https://www.amazon.com/Accelerate-Software-Performing-Technology-Organizations/dp/1942788339
- [46] Simon & Schuster: https://www.simonandschuster.com/books/Accelerate/Nicole-Forsgren-PhD/9781942788331

Commissioned from our research desk. Subject to final editorial discretion.